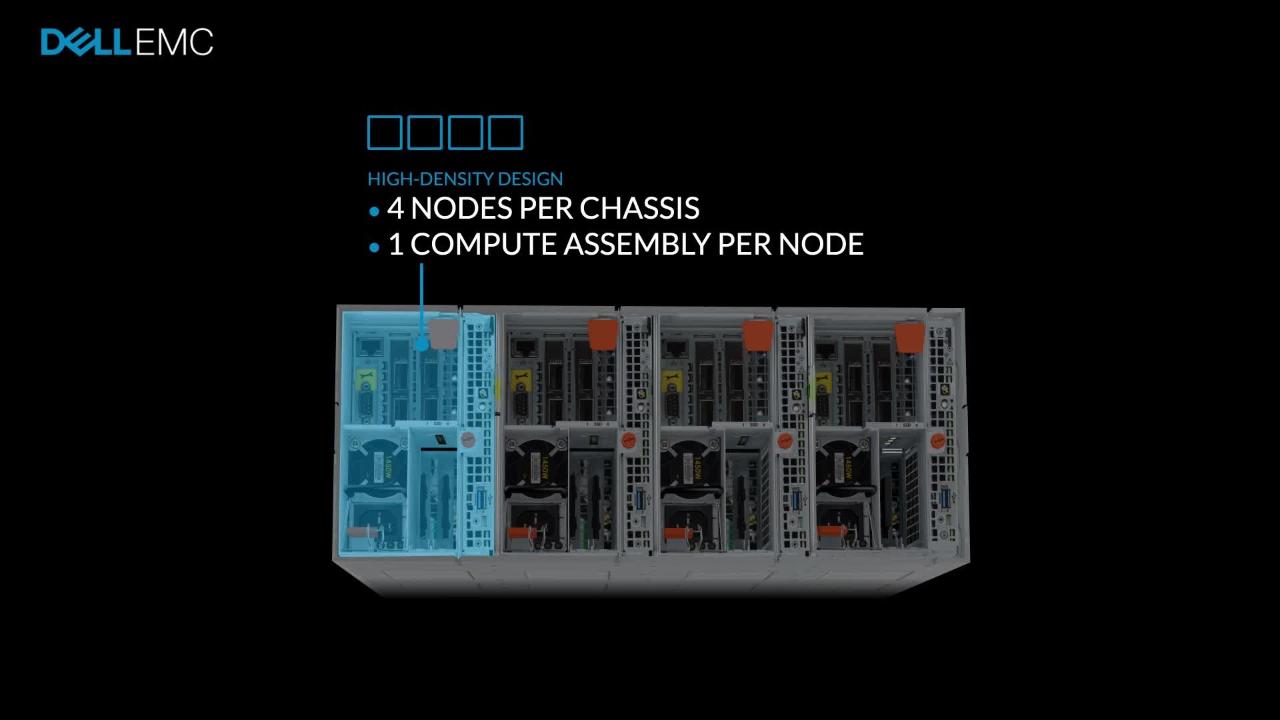

Downlinks (links to Isilon nodes) support 1 x 40 Gbps or 4 x 10 Gbps using a breakout cable.

Array components and specifications

Isilon All-Flash, hybrid, and archive models are contained within a four-node chassis.

There are four compute slots per chassis. Each slot contains:

- Single-socket CPU
- Four DDR4 DIMM slots
- Front-end 10 GbE or 40 GbE optical (depending on the node type)
- Back-end 10 GbE or 40 GbE optical (depending on the node type)
- Single onboard 1 GbE
- DB-9 serial connection

Note: 1 GbE connections are not used.

The following figure provides Isilon network connectivity in a VxBlock System:

The following port channels are used in the Isilon network topology:

- Port channel or vPC ID 3: Uplinks to connect the Isilon ToR switch and the VxBlock System ToR switch. Note: More Cisco Nexus 9000 Series Switch pair uplinks start from port channel or vPC ID 4, and increase for each switch pair.
- Peer-links to the VxBlock System ToR switch.
- Peer-links to the Converged Technology Extension for Isilon ToR switches. Note: More Cisco Nexus 9000 Series Switch pair peer-links start from port channel or vPC ID 52, and increase for each switch pair.
- Isilon node and ToR switch. Note: Isilon nodes start from port channel or vPC ID 1002 and increase for each LC node.

The following reservations apply for the Isilon topology:

- The last four ports on the Isilon ToR switches are reserved for uplinks.
- The two ports immediately preceding the uplink ports on the Isilon switches are reserved for peer-links.
- All the ports that are not uplinks or peer-links are reserved for nodes.

Create a port channel for the nodes starting at PC/vPC 1001 to directly connect the Isilon nodes to the VxBlock System ToR switches.

Isilon back-end architecture

With the Isilon OneFS 8.2.0 operating system, the back-end topology supports scaling a sixth generation Isilon cluster up to 252 nodes. Isilon uses a spine and leaf architecture that is based on the maximum internal bandwidth and 32-port count of Dell Z9100 switches. A spine and leaf architecture provides the following benefits:

- High scalability
- Redundancy
- Minimized latency and a lower likelihood of bottlenecks in the back-end network
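Returning to the ToR port reservations listed earlier (last four ports for uplinks, the two ports before them for peer-links, everything else for nodes), the split can be sketched in a few lines of Python. This is only an illustration: the function name `reserve_tor_ports`, the 1-based port numbering, and the 48-port example are assumptions, not values taken from the Isilon or Cisco documentation.

```python
from typing import Dict, List


def reserve_tor_ports(port_count: int) -> Dict[str, List[int]]:
    """Split a ToR switch's ports into node, peer-link, and uplink groups.

    Assumes ports are numbered 1..port_count (hypothetical numbering).
    """
    if port_count < 7:
        # 4 uplinks + 2 peer-links + at least 1 node port
        raise ValueError("need at least 7 ports")
    ports = list(range(1, port_count + 1))
    return {
        "nodes": ports[:-6],         # everything that is not an uplink or peer-link
        "peer_links": ports[-6:-4],  # the two ports just before the uplinks
        "uplinks": ports[-4:],       # the last four ports
    }


# Hypothetical 48-port switch, used purely as an example.
layout = reserve_tor_ports(48)
print(layout["peer_links"], layout["uplinks"])
```

On the assumed 48-port switch, ports 45 to 48 become uplinks, ports 43 and 44 become peer-links, and ports 1 to 42 remain available for nodes.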
Spine and leaf network deployments can have a minimum of one spine switch and two leaf switches. For small to medium clusters, the back-end network includes a pair of redundant ToR switches. Only the Z9100 Ethernet switch is supported in the spine and leaf architecture.

The Isilon back-end architecture contains a spine and a leaf layer. The aggregation and core network layers are condensed into a single spine layer. The spine and leaf architecture requires the following conditions:

- Every leaf switch connects to every spine switch.
- Connections from the leaf switch to spine switch must be evenly distributed. There should be the same number of connections to each spine switch from each leaf switch.
- Cluster nodes connect to leaf switches, which use spine switches to communicate.
- Switches of the same type (leaf or spine) do not connect to one another.
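The spine and leaf conditions above lend themselves to a simple cabling check. The following Python sketch is an illustration only, not a Dell or Isilon tool: the function name `check_spine_leaf`, the switch names, and the representation of cabling as `(switch_a, switch_b)` pairs are all assumptions. It interprets "evenly distributed" as each leaf having the same number of links to every spine.

```python
from collections import Counter


def check_spine_leaf(spines, leaves, links):
    """Return a list of rule violations for a spine and leaf fabric.

    spines, leaves: sets of switch names (hypothetical identifiers).
    links: iterable of (switch_a, switch_b) pairs, one pair per cable.
    """
    problems = []
    counts = Counter()
    for a, b in links:
        # Switches of the same type must not connect to one another.
        if (a in spines and b in spines) or (a in leaves and b in leaves):
            problems.append(f"same-layer link: {a} <-> {b}")
            continue
        leaf, spine = (a, b) if a in leaves else (b, a)
        if leaf not in leaves or spine not in spines:
            problems.append(f"unknown switch in link: {a} <-> {b}")
            continue
        counts[(leaf, spine)] += 1
    for leaf in sorted(leaves):
        per_spine = [counts[(leaf, s)] for s in sorted(spines)]
        if 0 in per_spine:
            # Every leaf switch must connect to every spine switch.
            problems.append(f"{leaf} is not connected to every spine")
        elif len(set(per_spine)) > 1:
            # Links from a leaf must be spread evenly across spines.
            problems.append(f"{leaf} has uneven spine links: {per_spine}")
    return problems


# Minimum deployment from the text: one spine switch and two leaf switches.
spines = {"spine1"}
leaves = {"leaf1", "leaf2"}
links = [("leaf1", "spine1"), ("leaf2", "spine1")]
print(check_spine_leaf(spines, leaves, links))
```

An empty list means the cabling satisfies the checked conditions. Adding a pair such as `("leaf1", "leaf2")` to `links` would be reported as a same-layer link.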
